[ASK] The Backpropagation Concept


Pangeransalju


Permisi suhu semuanya, sebelumnya ane minta maaf kalo ane ada salah dalam bertanya atau dalam menangkap materi

Ane pilih tanya di sini karena ane merasa benar-benar buntu dan gak nemu solusinya

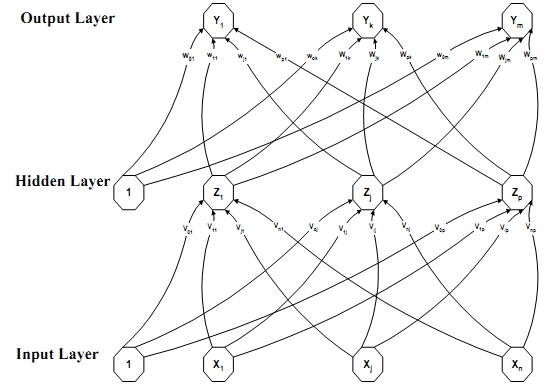

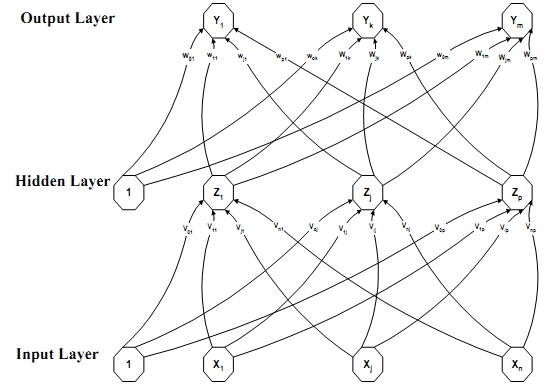

Sebelumnya ada baiknya ane tampilin gambar konsep backpropagation yang ane dapet dari mbah gugel


Next, let me describe the case I am working on.

Quote:

I want to build a prediction system that takes 2 inputs and produces 1 output.

For example:

1,1 -> 1

1,2 -> 2

2,1 -> 2

2,2 -> 4


Once I had the case, I searched on Google using 2 keywords:

Quote:

1. "Konsep backpropagation, contoh perhitungan backpropagation" (backpropagation concept, backpropagation calculation example)

2. "PHP backpropagation"


The first keyword clearly leads to Indonesian-language material, while the second leads to English-language source code.

Here is what confuses me:

Quote:

1. The Indonesian-language material explains that only the inputs go into layer X (the input layer), and the target is used only in the delta calculation. In my case the inputs are 1,1 ; 1,2 ; 2,1 ; 2,2.

2. The English-language source code, as far as I can read it, puts everything into layer X, and the target is still used as the target in the delta calculation. In my case that means every full row goes into layer X: 1,1->1 ; 1,2->2 ; 2,1->2 ; 2,2->4
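To make the first reading concrete, here is a minimal sketch where only the two inputs enter layer X and the target is set aside for the delta step. The helper name `splitPattern` is hypothetical, it is not part of the source code I found, and the rows are just my four examples.

```php
<?php
// Hypothetical helper (not from the source code in this thread):
// split one training row [x1, x2, target] into the values that enter
// layer X and the target that is only used when computing delta.
function splitPattern(array $row): array
{
    $target = $row[count($row) - 1];     // last column is the target
    $inputs = array_slice($row, 0, -1);  // everything before it is input
    return [$inputs, $target];
}

$rows = [[1, 1, 1], [1, 2, 2], [2, 1, 2], [2, 2, 4]];

foreach ($rows as $row) {
    [$inputs, $target] = splitPattern($row);
    // Under the first reading the input layer has count($inputs) = 2 units.
    echo implode(',', $inputs) . ' -> ' . $target . "\n";
}
```

With this split the input layer would have 2 units, whereas the listing further down uses `$layersSize=array(3,2,1)` and, if I read it correctly, passes the whole row to `ffwd()` during training. CMIIW.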


By delta I mean the error factor of the output unit. CMIIW.
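To pin down what I mean by delta, here is a small sketch for a single sigmoid output unit, assuming the usual squared-error form delta = (target - output) * output * (1 - output), which is also what the `bpgt()` function in the listing computes for the last layer. The function names `sigmoidUnit` and `outputDelta` are mine, purely for illustration.

```php
<?php
// Sigmoid activation for one unit.
function sigmoidUnit(float $net): float
{
    return 1.0 / (1.0 + exp(-$net));
}

// Error factor (delta) of one sigmoid output unit:
// (target - output) times the sigmoid derivative output * (1 - output).
function outputDelta(float $target, float $output): float
{
    return ($target - $output) * $output * (1.0 - $output);
}

$y = sigmoidUnit(0.0);          // net input 0 gives output 0.5
$delta = outputDelta(0.9, $y);  // (0.9 - 0.5) * 0.5 * 0.5
printf("y = %.4f, delta = %.4f\n", $y, $delta);
```

The target only appears inside `outputDelta()`; the forward pass in `sigmoidUnit()` never sees it.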

My questions are:

Quote:

1. Which one is actually correct?

I ask because I have already used the concept from the Indonesian material and the accuracy never gets above 50%.

FYI, I normalize inputs and targets differently.

In the example I gave, inputs are normalized by dividing by the maximum input value = 2,

and targets are normalized by dividing by the maximum target value = 4.

2. About normalization: is it done over one whole data set (1,1->1 ; 1,2->2 ; 2,1->2 ; 2,2->4), or are input normalization and target normalization done separately?
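To show what I mean by normalizing inputs and targets separately, here is a sketch that uses min-max scaling into [0.1, 0.9] (the LO/HI range from the source code I found) instead of my divide-by-maximum. `scaleValue` and `unscaleValue` are hypothetical names, and the ranges 1..2 for inputs and 1..4 for targets are taken from my four example rows.

```php
<?php
const LO = 0.1;
const HI = 0.9;

// Map $x from [$min, $max] into [LO, HI]. Assumes $max > $min.
function scaleValue(float $x, float $min, float $max): float
{
    return (HI - LO) * ($x - $min) / ($max - $min) + LO;
}

// Inverse mapping: from [LO, HI] back to [$min, $max].
function unscaleValue(float $s, float $min, float $max): float
{
    return ($max - $min) * ($s - LO) / (HI - LO) + $min;
}

$rows = [[1, 1, 1], [1, 2, 2], [2, 1, 2], [2, 2, 4]];

// Ranges taken over the whole data set, inputs and target kept apart.
$inMin = 1.0; $inMax = 2.0;  // both input columns
$tMin  = 1.0; $tMax  = 4.0;  // target column

foreach ($rows as [$x1, $x2, $t]) {
    printf(
        "%.2f, %.2f -> %.2f\n",
        scaleValue($x1, $inMin, $inMax),
        scaleValue($x2, $inMin, $inMax),
        scaleValue($t, $tMin, $tMax)
    );
}
```

A network output would then go back to the original scale with `unscaleValue($output, $tMin, $tMax)`, using the target range only.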


Sorry if this is messy, and thanks in advance for your guidance.

This is the source code I found. Sorry if I have misread it.

Spoiler for coding:

<?php

/**

* file: class.BackPropagationScale.php

*

* Using artificial intelligence and NN (neural networks)

* to solve the multiplication table problem.

*

* It uses a technique called back propagation, that is

* the network learns by calculating the errors going

* backwards: from the output, through the first hidden layers.

*

* The learning unit is called the perceptron: a neuron capable

* of learning, connected to layers and adjusting the weights.

*

* Data needs to be scaled/unscaled to adjust the values common to

* the neural network

*

* Free for educational purposes

* Copyright © 2010

*

* @author freedelta ( http://freedelta.free.fr)

*/

error_reporting(E_ERROR);

define("_RAND_MAX",32767);

define("HI",0.9);

define("LO",0.1);

class BackPropagationScale

{

/* Output of each neuron */

public $output=null;

/* Last calculated output value */

public $vectorOutput=null;

/* delta error value for each neuron */

public $delta=null;

/* Array of weights for each neuron */

public $weight=null;

/* Num of layers in the net, including input layer */

public $numLayers=null;

/* Array of numLayers elements containing the size of each layer */

public $layersSize=null;

/* Learning rate */

public $beta=null;

/* Momentum */

public $alpha=null;

/* Storage for weight-change made in previous epoch (three-dimensional array) */

public $prevDwt=null;

/* Data */

public $data=null;

/* Test Data */

public $testData=null;

/* N lines of Data */

public $NumPattern=null;

/* N columns in Data */

public $NumInput=null;

/* Minimum value in data set */

public $minX=0;

/* Maximum value in data set */

public $maxX=1;

/* Stores ann scale calculated parameters */

public $normalizeMax=null;

public $normalizeMin=null;

/* Holds all output data in one array */

public $output_vector=null;

public function __construct($numLayers,$layersSize,$beta,$alpha,$minX,$maxX)

{

$this->alpha=$alpha;

$this->beta=$beta;

$this->minX=$minX;

$this->maxX=$maxX;

// Set no of layers and their sizes

$this->numLayers=$numLayers;

$this->layersSize=$layersSize;

// seed and assign random weights

for($i=1;$i<$this->numLayers;$i++)

{

for($j=0;$j<$this->layersSize[$i];$j++)

{

for($k=0;$k<$this->layersSize[$i-1]+1;$k++)

{

$this->weight[$i][$j][$k]=$this->rando();

}

// bias in the last neuron

$this->weight[$i][$j][$this->layersSize[$i-1]]=-1;

}

}

// initialize previous weights to 0 for first iteration

for($i=1;$i<$this->numLayers;$i++)

{

for($j=0;$j<$this->layersSize[$i];$j++)

{

for($k=0;$k<$this->layersSize[$i-1]+1;$k++)

{

$this->prevDwt[$i][$j][$k]=(double)0.0;

}

}

}

}

public function rando()

{

$randValue = LO + (HI - LO) * mt_rand(0, _RAND_MAX)/_RAND_MAX;

return $randValue;//32767

}

/* --- sigmoid function */

public function sigmoid($inputSource)

{

return (double)(1.0 / (1.0 + exp(-$inputSource)));

}

/* --- mean square error */

public function mse($target)

{

$mse=0;

for($i=0;$i<$this->layersSize[$this->numLayers-1];$i++)

{

$mse+=($target-$this->output[$this->numLayers-1][$i])*($target-$this->output[$this->numLayers-1][$i]);

}

return $mse/2;

}

/* --- returns i'th output of the net */

public function Out($i)

{

return $this->output[$this->numLayers-1][$i];

}

/* ---

* Feed forward one set of input

* to update the output values for each neuron. This function takes the input

* to the net and finds the output of each neuron

*/

public function ffwd($inputSource)

{

$sum=0.0;

$numElem=count($inputSource);

// assign content to input layer

for($i=0;$i<$numElem;$i++)

{

$this->output[0][$i]=$inputSource[$i]; // output[i][j] is the output of the j-th neuron in the i-th layer

}

// assign output (activation) value to each neuron using the sigmoid function

for($i=1;$i<$this->numLayers;$i++) // For each layer

{

for($j=0;$j<$this->layersSize[$i];$j++) // For each neuron in current layer

{

$sum=0.0;

for($k=0;$k<$this->layersSize[$i-1];$k++) // For each input from each neuron in the preceding layer

{

$sum+=$this->output[$i-1][$k]*$this->weight[$i][$j][$k]; // Apply weight to inputs and add to sum

}

// Apply bias

$sum+=$this->weight[$i][$j][$this->layersSize[$i-1]];

// Apply sigmoid function

$this->output[$i][$j]=$this->sigmoid($sum);

}

}

}

/* --- Backpropagate errors from the output layer back to the first hidden layer */

public function bpgt($inputSource,$target)

{

/* --- Update the output values for each neuron */

$this->ffwd($inputSource);

////////////////////////////////////////////////

/// FIND DELTA FOR OUTPUT LAYER (Last Layer) ///

////////////////////////////////////////////////

for($i=0;$i<$this->layersSize[$this->numLayers-1];$i++)

{

$this->delta[$this->numLayers-1][$i]=$this->output[$this->numLayers-1][$i]*(1-$this->output[$this->numLayers-1][$i])*($target-$this->output[$this->numLayers-1][$i]);

}

/////////////////////////////////////////////////////////////////////////////////////////////

/// FIND DELTA FOR HIDDEN LAYERS (From Last Hidden Layer BACKWARDS To First Hidden Layer) ///

/////////////////////////////////////////////////////////////////////////////////////////////

for($i=$this->numLayers-2;$i>0;$i--)

{

for($j=0;$j<$this->layersSize[$i];$j++)

{

$sum=0.0;

for($k=0;$k<$this->layersSize[$i+1];$k++)

{

$sum+=$this->delta[$i+1][$k]*$this->weight[$i+1][$k][$j];

}

$this->delta[$i][$j]=$this->output[$i][$j]*(1-$this->output[$i][$j])*$sum;

}

}

////////////////////////

/// MOMENTUM (Alpha) ///

////////////////////////

for($i=1;$i<$this->numLayers;$i++)

{

for($j=0;$j<$this->layersSize[$i];$j++)

{

for($k=0;$k<$this->layersSize[$i-1];$k++)

{

$this->weight[$i][$j][$k]+=$this->alpha*$this->prevDwt[$i][$j][$k];

}

$this->weight[$i][$j][$this->layersSize[$i-1]]+=$this->alpha*$this->prevDwt[$i][$j][$this->layersSize[$i-1]];

}

}

///////////////////////////////////////////////

/// ADJUST WEIGHTS (Using Steepest Descent) ///

///////////////////////////////////////////////

for($i=1;$i<$this->numLayers;$i++)

{

for($j=0;$j<$this->layersSize[$i];$j++)

{

for($k=0;$k<$this->layersSize[$i-1];$k++)

{

$this->prevDwt[$i][$j][$k]=$this->beta*$this->delta[$i][$j]*$this->output[$i-1][$k];

$this->weight[$i][$j][$k]+=$this->prevDwt[$i][$j][$k];

}

/* --- Apply the corrections */

$this->prevDwt[$i][$j][$this->layersSize[$i-1]]=$this->beta*$this->delta[$i][$j];

$this->weight[$i][$j][$this->layersSize[$i-1]]+=$this->prevDwt[$i][$j][$this->layersSize[$i-1]];

}

}

}

///////////////////////////////

/// SCALING FUNCTIONS BLOCK ///

///////////////////////////////

/* --- Set scaling parameters */

public function setScaleOutput($data)

{

$oldMin=$data[0][0];

$oldMax=$oldMin;

$numElem=count($data[0]);

/* --- First calculate minimum and maximum */

for($i=0;$i<$this->NumPattern;$i++)

{

$oldMin=$data[$i][0];

$oldMax=$oldMin;

for($j=1;$j<$numElem;$j++)

{

// Min

if($oldMin > $data[$i][$j])

{

$oldMin=$data[$i][$j];

}

// Max

if($oldMax < $data[$i][$j])

{

$oldMax=$data[$i][$j];

}

}

$this->normalizeMin[$i]=$oldMin;

$this->normalizeMax[$i]=$oldMax;

}

}

/* --- Scale input data to range before feeding it to the network */

/*

x - Min

t = (HI -LO) * (---------) + LO

Max-Min

*/

public function scale($data)

{

$this->setScaleOutput($data);

$numElem=count($data[0]);

$temp=0.0;

for( $i=0; $i < $this->NumPattern; $i++ )

{

for($j=0;$j<$numElem;$j++)

{

$temp=(HI-LO)*(($data[$i][$j] - $this->normalizeMin[$i]) / ($this->normalizeMax[$i] - $this->normalizeMin[$i])) + LO;

$data[$i][$j]=$temp;

}

}

return $data;

}

/* --- Unscale output data to original range */

/*

x - LO

t = (Max-Min) * (---------) + Min

HI-LO

*/

public function unscaleOutput($output_vector)

{

$temp=0.0;

for( $i=0; $i < $this->NumPattern; $i++ )

{

$temp=($this->normalizeMax[$i]-$this->normalizeMin[$i]) * (($output_vector[$i] - LO) / (HI-LO)) + $this->normalizeMin[$i] ;

$unscaledVector[$i] =$temp;

}

return $unscaledVector;

}

public function Run($dataX,$testDataX)

{

/* --- Threshold - thresh (value of target mse, training stops once it is achieved) */

$Thresh = 0.00001;

$numEpoch = 200000;

$MSE=0.0;

$this->NumPattern=count($dataX);

$this->NumInput=count($dataX[0]);

/* --- Pre-process data: Scale input and test values */

$data=$this->scale($dataX);

/* --- Test data=(data-1 column) */

for($i=0;$i<$this->NumPattern;$i++)

{

for($j=0;$j<$this->NumInput-1;$j++)

{

$testData[$i][$j]=$data[$i][$j];

}

}

/* --- Start training: looping through epochs and exit when MSE error < Threshold */

echo "\nNow training the network....";

for($e=0;$e<$numEpoch;$e++)

{

/* -- Backpropagate */

$this->bpgt($data[$e%$this->NumPattern],$data[$e%$this->NumPattern][$this->NumInput-1]);

$MSE=$this->mse($data[$e%$this->NumPattern][$this->NumInput-1]);

if($e==0)

{

echo "\nFirst epoch Mean Square Error: $MSE";

}

if( $MSE < $Thresh)

{

echo "\nNetwork Trained. Threshold value achieved in ".$e." iterations.";

echo "\nMSE: ".$MSE;

break;

}

}

echo "\nLast epoch Mean Square Error: $MSE";

echo "\nNow using the trained network to make predictions on test data....\n";

for ($i = 0 ; $i < $this->NumPattern; $i++ )

{

$this->ffwd($testData[$i]);

$this->vectorOutput[]=(double)$this->Out(0);

}

$out=$this->unscaleOutput($this->vectorOutput);

for($col=1;$col<$this->NumInput;$col++)

{

echo "Input$col\t";

}

echo "Predicted \n";

for ($i = 0 ; $i < $this->NumPattern; $i++ )

{

for($j=0;$j<$this->NumInput-1;$j++)

{

echo " ".$testDataX[$i][$j]." \t\t";

}

echo " " .abs($out[$i])."\n";

}

}

}

/* --- Sample use */

// Multiplication data: 1 x 1 = 1, 1 x 2 = 2,.. etc

$data=array(0=>array(1,1,1),

1=>array(1,2,2),

2=>array(1,3,3),

3=>array(1,4,4),

4=>array(1,5,5),

5=>array(2,1,2),

6=>array(2,2,4),

7=>array(2,3,6),

8=>array(2,4,8),

9=>array(2,5,10),

10=>array(3,1,3),

11=>array(3,2,6),

12=>array(3,3,9),

13=>array(3,4,12),

14=>array(3,5,15),

15=>array(4,1,4),

16=>array(4,2,8),

17=>array(4,3,12),

18=>array(4,4,16),

19=>array(4,5,20),

20=>array(5,1,5),

21=>array(5,2,10),

22=>array(5,3,15),

23=>array(5,4,20),

24=>array(5,5,25)

);

// 1 x 1 =?

$testData=array(0=>array(1,1),

1=>array(1,2),

2=>array(1,3),

3=>array(1,4),

4=>array(1,5),

5=>array(2,1),

6=>array(2,2),

7=>array(2,3),

8=>array(2,4),

9=>array(2,5),

10=>array(3,1),

11=>array(3,2),

12=>array(3,3),

13=>array(3,4),

14=>array(3,5),

15=>array(4,1),

16=>array(4,2),

17=>array(4,3),

18=>array(4,4),

19=>array(4,5),

20=>array(5,1),

21=>array(5,2),

22=>array(5,3),

23=>array(5,4),

24=>array(5,5)

);

$layersSize=array(3,2,1);

$numLayers = count($layersSize);

// Learning rate - beta

// momentum - alpha

$beta = 0.3;

$alpha = 0.1;

$minX=1;

$maxX=25;

// Creating the net

$bp=new BackPropagationScale($numLayers,$layersSize,$beta,$alpha,$minX,$maxX);

$bp->Run($data,$testData);

?>
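While reading `setScaleOutput()` and `scale()` in the listing, it looks to me like the minimum and maximum are taken per row (per pattern, target included), not per column. Below is a small sketch of that formula applied to single rows, as I understand it. `scaleRow` is a hypothetical name, and the guard for rows whose values are all equal is my own addition, not something in the listing.

```php
<?php
const LO = 0.1;
const HI = 0.9;

// Scale one row with its own min and max, mirroring the per-pattern
// formula used by scale() in the listing.
function scaleRow(array $row): array
{
    $min = min($row);
    $max = max($row);
    $scaled = [];
    foreach ($row as $x) {
        if ($max == $min) {
            $scaled[] = LO; // guard added for this sketch: all values equal
        } else {
            $scaled[] = (HI - LO) * ($x - $min) / ($max - $min) + LO;
        }
    }
    return $scaled;
}

foreach ([[1, 2, 2], [2, 2, 4], [5, 5, 25]] as $row) {
    $cells = [];
    foreach (scaleRow($row) as $v) {
        $cells[] = sprintf('%.2f', $v);
    }
    echo implode(',', $row) . ' => ' . implode(', ', $cells) . "\n";
}
```

If I read this right, the product is always the largest value in its row, so every target scales to 0.9, and the rows 2,2,4 and 5,5,25 look identical to the network. The row 1,1,1 has max equal to min, which in the listing would mean a division by zero. CMIIW.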


Edited by Pangeransalju 17-05-2014 16:00
